The Structural Navigation Agent: Enforcement Architecture and Structural Analysis for Multi-Agent Coordination

This article is presented in two parts. Part I describes the technical architecture of the Structural Navigation Agent — five primitives, three formal results, and a defined scope of enforcement jurisdiction. Part II examines the deeper structural reasoning behind the design decisions: why enforcement cannot be delegated to participants, why observation is not enforcement, and what coordination systems lose when they confuse the two.

Part I. Technical Architecture

I want to describe a class of architectural problem that I believe has no adequate solution in the current multi-agent systems literature. The problem is not coordination itself — that has been studied exhaustively. The problem is enforcement of coordination invariants in systems where agents come and go, forget what they knew, and silently change how they reason.

I will then describe an architecture — the Structural Navigation Agent — that I believe constitutes a new primitive for this class.

1. The Enforcement Gap

Consider a system where three AI agents — possibly from different providers, with different model families, different training distributions — collaborate on a shared corpus over weeks or months. They read and write to shared state stores. They propagate decisions along typed paths. They operate under trust constraints.

Every serious multi-agent framework recognizes that such a system needs coordination invariants: properties that must hold for the system to remain coherent. Irreversibility of committed state transitions. Monotonicity of trust. Typed access enforcement. Bounded divergence for recovering agents.

The standard approach is to declare these invariants at design time and assume they hold at runtime. This assumption is safe exactly as long as no agent departs, no context window is truncated, and no model is updated. In every real deployment I have encountered, all three happen routinely.

The gap is not that we lack good invariants. The gap is that we lack runtime enforcement of those invariants by something that is itself immune to the failure modes it monitors. This is the enforcement gap.

Existing approaches do not close this gap. Distributed consensus protocols — Paxos, Raft, two-phase commit — provide byte-level agreement across homogeneous nodes, not semantic convergence across heterogeneous cognitive agents. Workflow orchestrators — LangGraph, CrewAI, AutoGen — participate in task computation, which means they are subject to the same failure modes they would need to detect. Event sourcing provides append-only logs for irreversibility at the storage level, but offers no trust monotonicity, no typed access constraints, no bounded cold-start divergence. Observability platforms — Prometheus, Datadog — observe operational metrics, not coordination invariant compliance. They have no enforcement authority.

None of these architectures provide a dedicated primitive for active enforcement of coordination invariants by an agent that is structurally prohibited from participating in task computation.

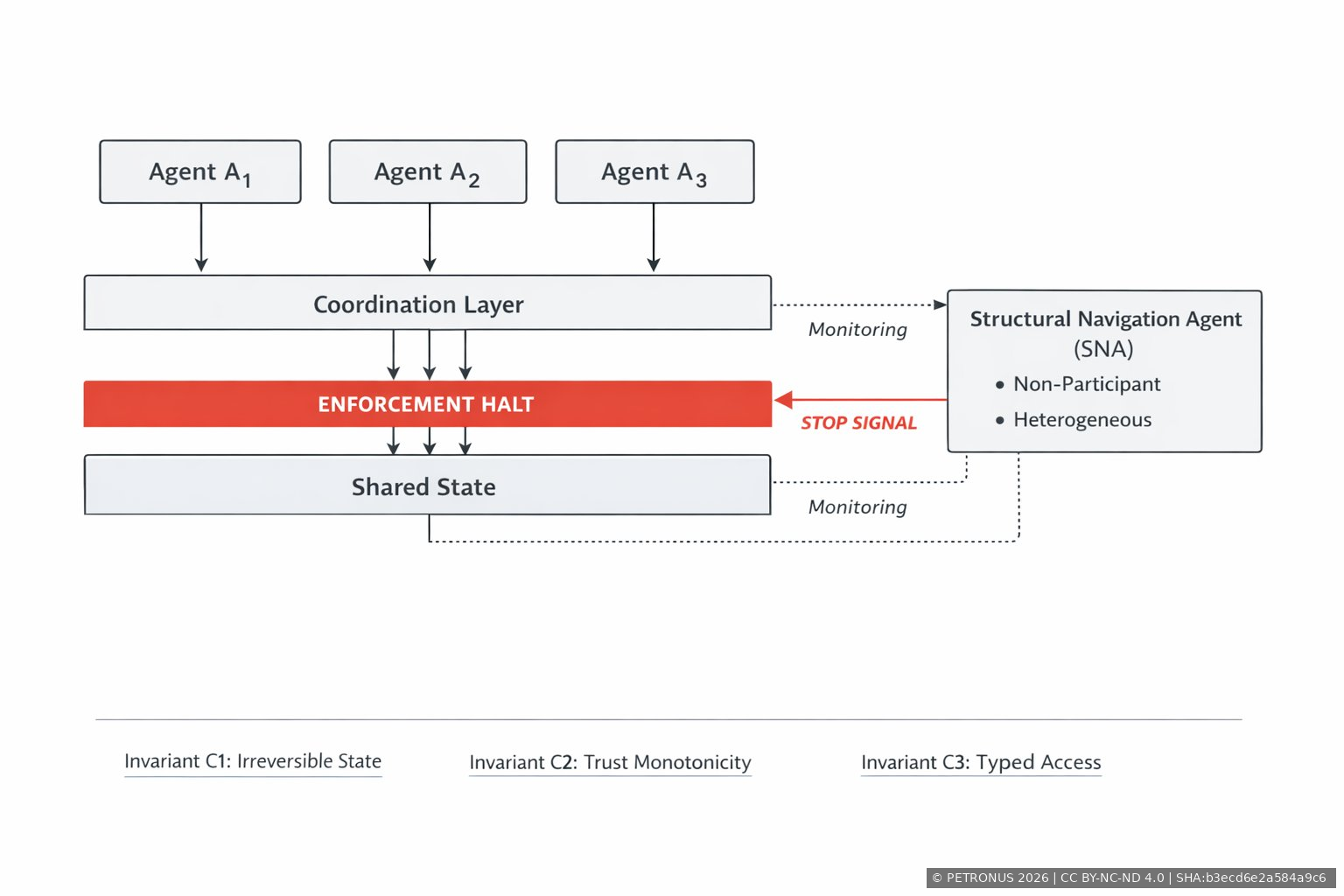

2. The Structural Navigation Agent

The Structural Navigation Agent (SNA) is a dedicated agent in a multi-agent coordination system whose sole function is the active enforcement of coordination invariants. The SNA is defined by what it cannot do: it cannot perform task-level computation, cannot access the task corpus, and cannot share architectural identity with the agents it monitors.

These are not design preferences. They are structural preconditions for enforcement validity. I will explain why each is necessary.

The SNA architecture comprises five primitives.

Non-Participation Constraint (NPC). The SNA does not have read or write access to the task corpus, task outputs, intermediate reasoning artifacts, or any content-level data produced by monitored agents. This is enforced at the dispatch level: the coordination system does not route task-level requests to the SNA. The SNA's input space is restricted to coordination topology data — agent identifiers, propagation timestamps, state transition records, access control events, and invariant condition signals.

Heterogeneity Architectural Requirement (HAR). The SNA must be architecturally distinct from the agents it monitors — different model family, different training distribution, different reasoning architecture. If the SNA shares architectural identity with a monitored agent, the two share correlated blind spots: systematic patterns of reasoning failure that arise from shared training data and shared optimization objectives. Correlated blind spots undermine independent monitoring because the SNA fails to detect precisely those violations that the monitored agent is most likely to produce.

Invariant Condition Monitor (ICM). The ICM continuously verifies four coordination conditions:

C1 — Irreversible State Evolution: committed state transitions may be superseded but not erased. The ICM detects silent rollback.

C2 — Trust-Monotonic Propagation: trust levels on propagation paths may increase but may not decrease. The ICM detects trust regression.

C3 — Typed Access Enforcement: every agent-store interaction must be mediated by the coordination layer's typed access mechanism. The ICM detects access bypasses.

C4 — Bounded Structural Cold-Start Divergence: the divergence between any recovering agent's context and the last committed state must be deterministically bounded and must not grow with operational history length. The ICM detects unbounded drift.

The ICM does not interpret semantic content. It monitors structural properties — ordering, trust levels, access paths, divergence metrics. This structural focus is a direct consequence of the NPC: the ICM cannot evaluate content because it has no access to content.

Propagation Halt Authority (PHA). When the ICM detects a violation of any coordination condition, the SNA exercises Propagation Halt Authority. The PHA suspends inter-agent propagation on the affected path. The SNA does not correct violations, does not suggest corrections, and does not modify agent behavior. Correction is a task-level decision. The SNA's role is enforcement, not remediation. The halt flag is visible to all agents in the system.

Viability Scope Delimiter (VSD). The VSD defines the jurisdictional boundary of SNA monitoring. The SNA monitors coordination-level events — state transitions, propagation events, access control events, trust level changes, agent lifecycle events. It does not monitor task performance, output quality, reasoning correctness, or any task-level property. Without this explicit boundary, the SNA's monitoring function would expand into task-level evaluation, violating the NPC and degrading enforcement capacity.

3. Three Formal Results

The architecture is supported by three theorems. I will state each and provide the structural reasoning.

Theorem 1: Enforcement Necessity. For any finite-horizon implementation of a coordination system satisfying C1–C4 with heterogeneous cognitive agents subject to agent turnover, context window truncation, or model updates, runtime enforcement of C1–C4 requires a dedicated non-participant agent.

The argument: under agent turnover, static constraints remain formally defined but lack runtime enforcement — a departed agent's compliance cannot retroactively validate state for newly arrived agents. Under context truncation, an agent may lose awareness of constraints established before its current window. Under model updates, compliance behavior may change without any coordination-level event. In all three cases, a property guaranteed at design time fails at runtime without protocol violation. Active enforcement by a persistent non-participant agent is the necessary architectural response.

Theorem 2: Non-Participation Preservation. If an SNA gains access to the task corpus or participates in task-level computation, its enforcement authority is structurally invalidated — not merely degraded.

The argument: the SNA's enforcement capacity depends on immunity to the failure modes of monitored agents. Task-level computation introduces content-dependent reasoning, which is subject to hallucination, attention degradation, context limitations, and model-specific biases. An SNA that reasons about task content is no longer immune to these failure modes. Its monitoring becomes correlated with the agents it monitors. The failure mode is categorical, not gradual. Participation produces structural invalidity, not degradation.

Theorem 3: Heterogeneity Necessity. If the SNA shares architectural identity with a monitored agent, its monitoring capacity degrades: the conditional probability of detecting a violation, given that the violation arises from a shared failure mode, decreases relative to architecturally independent monitoring.

The argument: architecturally identical agents share systematic failure patterns — correlated blind spots. When the SNA shares identity with a monitored agent, the probability that the SNA detects a coordination violation decreases for precisely those violations that the monitored agent is most likely to produce. Independent monitoring requires architectural independence. Heterogeneity is not a quality improvement — it is a necessary condition for non-trivial enforcement.

4. What the SNA Is Not

The SNA is not a supervisory monitor. Supervisory monitoring architectures observe and report. The SNA detects and enforces. The Propagation Halt Authority gives the SNA the capacity to suspend coordination-layer data flow on the affected path. No supervisory monitoring architecture in the current literature possesses this enforcement primitive.

The SNA is not a workflow orchestrator. Orchestrators participate in task computation — they route tasks, manage memory, call tools. They are subject to the same failure modes they would need to detect. The SNA is structurally prohibited from participation.

The SNA is not a static constraint checker. Static constraints are necessary but insufficient. They are defined at design time and hold at design time. The SNA provides runtime enforcement of invariants that static constraints declare but cannot maintain.

The SNA is not a reward signal, a penalty function, or an optimization target. It does not shape agent behavior. It detects violations and halts propagation. The asymmetry is deliberate: enforcement without remediation preserves the structural separation between coordination and computation.

5. The Enforcement Binary

A key architectural decision in the SNA design is the binary nature of enforcement authority. The SNA either has valid enforcement authority, or it does not. There is no intermediate state where it "partially" enforces.

If the NPC is violated — the SNA accesses task content — enforcement authority is invalidated for the duration of the violation. The coordination layer detects this and disables the PHA until non-participation is restored. This is not a tuning parameter. It is a structural consequence of Theorem 2.

The binary extends to violation detection. A coordination condition is either satisfied or violated. The PHA either halts propagation or does not. There is no partial halt, no weighted enforcement, no probabilistic gating. This binary structure eliminates the possibility of enforcement gradients that could be gamed or optimized around.

6. Scope and Applicability

The SNA architecture is applicable to any domain where heterogeneous cognitive agents interact with shared mutable state over time horizons that exceed the continuous presence of any single agent. This includes intellectual property portfolio management, regulatory compliance systems, engineering specification management, research corpus governance, and multi-agent software development environments.

In each of these domains, the SNA provides the architectural property that coordination invariants are actively enforced regardless of agent turnover, context truncation, or model evolution. Without the SNA, these systems rely on static constraints that are formally defined but not runtime-enforced.

Part II. Structural Analysis

Part I described the architecture. This part examines why the architecture takes the shape it does — and what breaks if it does not.

7. The Participation Trap

There is a pattern that recurs across every multi-agent framework I have studied. The system needs some form of coordination oversight. The natural response is to assign this function to the orchestrator — the agent that already routes tasks, manages memory, and coordinates tool calls. The orchestrator is already there. It already sees everything. Why not have it enforce coordination invariants too?

The answer is structural, not pragmatic.

The orchestrator participates in task computation. It selects which agent handles which task. It decides what goes into memory. It determines what context each agent receives. These are task-level decisions that depend on content — the content of the corpus, the content of agent outputs, the content of user instructions.

An agent that makes content-dependent decisions is subject to content-dependent failure modes. It can hallucinate. Its attention can degrade. Its context window can truncate. Its model can be updated. These are not theoretical concerns. They are the daily reality of every LLM-based system in production.

Now ask: what happens when the agent responsible for enforcing coordination invariants is also subject to the same failure modes that violate those invariants?

The answer is correlated failure. The orchestrator fails to detect the very violations it was supposed to prevent — not because it is incompetent, but because its failure modes are coupled to the failure modes of the system it monitors. This is not a bug. It is a structural consequence of participation.

I call this the participation trap: the architectural impossibility of reliable enforcement by a participant. The trap is not that participants cannot sometimes detect violations. They can. The trap is that they systematically fail to detect the violations that matter most — the ones that arise from the same computational substrate they share with the agents they monitor.

8. Observation Is Not Enforcement

There is a second pattern that I believe reflects a conceptual confusion in the field. Many systems respond to the enforcement gap by adding monitoring: dashboards, alerting systems, observability platforms, health-check agents. The implicit assumption is that if you can see a violation, you can enforce against it.

This assumption conflates two architecturally distinct functions.

Observation is the detection of system states. It requires read access and pattern recognition. It is a passive function — it does not alter the system it observes.

Enforcement is the capacity to halt system transitions upon violation detection. It requires authority to intervene in coordination-layer data flow. It is an active function — it does alter the system it monitors.

The distinction matters because observation without enforcement creates a specific failure mode: the system detects violations, generates alerts, logs anomalies — and coordination continues on the violated path. The invariant is known to be violated. The violation is documented. And the system proceeds as if it were not.

This is not a hypothetical scenario. It is the default behavior of every monitoring-only architecture. Alerts are generated. Dashboards turn red. Engineers are notified. But the coordination topology continues to propagate state transitions along paths where invariants no longer hold.

The SNA closes this gap with a specific primitive — the Propagation Halt Authority. Upon violation detection, the SNA does not alert. It halts. It sets a flag on the affected propagation path, queues pending transitions, and exposes the halt status to all agents. Propagation resumes only when the violation is resolved.

This is not a philosophical distinction. It is an architectural one. The difference between a system that knows its invariants are violated and a system that halts upon violation is the difference between documentation and enforcement.

9. Why Non-Participation Must Be a Precondition, Not a Preference

In supervisory monitoring architectures, the monitor's non-participation in task computation is a design preference. It is considered good practice — a separation of concerns. But it is not structurally enforced. If the monitor needs to log something, summarize something, route something — it does. Its non-participation is relaxed when convenience requires it.

In the SNA architecture, non-participation is a formal precondition for enforcement validity. The distinction between preference and precondition is the load-bearing joint of the entire design.

Here is why.

The SNA's enforcement capacity depends on a single architectural property: immunity to the failure modes of monitored agents. If the SNA does not reason about task content, it cannot hallucinate about task content. If it does not process corpus data, its attention cannot degrade on corpus data. If it does not perform task-level computation, its reasoning cannot be biased by the same optimization objectives that bias monitored agents.

This immunity is not a nice-to-have. It is the foundation of independent monitoring. Without it, the SNA's detection capability becomes correlated with the failure modes of the system it monitors. And correlated detection is precisely the failure mode that makes enforcement unreliable.

The consequence is that any violation of non-participation — any access to task content, any participation in task computation — structurally invalidates enforcement authority. Not degrades it. Invalidates it. The independence assumption that underlies invariant enforcement no longer holds. Correlated blind spots emerge. The coordination system can no longer rely on independent detection of violations.

This is why non-participation must be a precondition: because the consequence of violating it is not a decrease in quality but a collapse of the structural foundation on which enforcement rests.

10. The Heterogeneity Argument

There is a subtler point about independence that goes beyond non-participation. Two agents can both be non-participants — both excluded from task computation — and still share correlated blind spots if they share architectural identity.

Consider an SNA built on the same model family as the agents it monitors. Same training data distribution. Same attention mechanisms. Same optimization objectives. The SNA does not access task content — the NPC is enforced. But it does reason about coordination topology data. And the way it reasons — the patterns it recognizes, the anomalies it flags, the thresholds it applies — is shaped by the same training distribution that shapes the reasoning of monitored agents.

The result is that the SNA and the monitored agents share systematic failure patterns. They fail in correlated ways. When a monitored agent produces a coordination violation that arises from a systematic reasoning failure (attention to ordering, sensitivity to trust boundaries, divergence estimation), the SNA — sharing the same reasoning architecture — is less likely to detect that specific violation than an architecturally independent SNA would be.

This is the heterogeneity argument. It is not an argument for quality or diversity for its own sake. It is a structural argument: independent detection requires independent architecture. Shared architecture produces shared blind spots. Shared blind spots produce correlated failure. Correlated failure undermines enforcement.

The Heterogeneity Architectural Requirement formalizes this: the SNA must differ from monitored agents along at least one of model family, training distribution, or reasoning architecture. This is enforced at system configuration time.

11. The Binary Principle

I want to draw attention to one design decision that may seem extreme but is, I believe, architecturally necessary: the binary nature of enforcement authority.

The SNA either has valid enforcement authority, or it does not. There is no intermediate state. If the NPC is violated, authority is invalidated. If it is restored, authority resumes. There is no partial enforcement, no weighted compliance, no probabilistic gating.

Similarly, coordination conditions are binary. C1 is satisfied or violated. C2 is satisfied or violated. The PHA either halts or does not.

This binary structure is not a limitation. It is a feature that eliminates a specific class of failure modes: enforcement gradients.

If enforcement authority were continuous — if the SNA could "partially" enforce, or if violations could be "weighted" — then the system would create a gradient that agents could navigate. An agent could learn that certain types of violations produce mild enforcement while others produce strong enforcement. It could adjust its behavior to stay near the boundary. It could optimize against the enforcement function.

Binary enforcement eliminates this surface. There is no gradient to follow. There is no boundary to approach. There is compliance, or there is halt. The architectural consequence is that enforcement cannot be gamed, optimized around, or gradually eroded.

12. What the SNA Reveals

The SNA is not only an architectural primitive. It is also a diagnostic for multi-agent systems.

If you examine an existing multi-agent coordination system and ask "who enforces the coordination invariants", you discover one of three answers. Either no one enforces them — they are declared but not maintained. Or a participant enforces them — which means enforcement is structurally compromised by the participation trap. Or an observer monitors them — which means violations are detected but not halted.

The SNA reveals a fourth answer: a dedicated non-participant enforcer with halt authority, architectural independence, and jurisdictional scope.

The existence of this fourth answer does not invalidate the other three. It reveals what they lack. Static constraints lack runtime enforcement. Participant enforcement lacks structural independence. Observational monitoring lacks enforcement authority.

The SNA is the minimal architectural response to the conjunction of these three gaps.

13. Scope

I want to be precise about what the SNA does not claim. It does not claim to solve all coordination problems. It does not claim to be necessary for all multi-agent systems. It does not claim that enforcement alone is sufficient for coordination safety.

The SNA addresses a specific architectural gap: the absence of a dedicated enforcement primitive for coordination invariants in systems with heterogeneous cognitive agents, agent turnover, context truncation, and model updates. It provides five jointly necessary primitives, three formal results, and a defined scope of jurisdiction.

The claim is not that the SNA is the only possible enforcement architecture. The claim is that any enforcement architecture for this class of systems must provide at least these structural properties: non-participation as a precondition for validity, architectural independence from monitored agents, continuous invariant monitoring, halt authority, and jurisdictional scope.

How these properties are realized may vary. That they must be present is a structural requirement for the enforcement class described here.

The architecture described in this article is the subject of a filed US provisional patent application (March 2026).

— MxBv

petronus.eu