The New Reality

In 2024, a team of three could write maybe 50 pages of polished documentation per month. In 2026, that same team generates 500. AI made writing nearly free. But it made something else nearly invisible:

The connections between what you've written.

When you write slowly, you remember what you said on page 12 when you reach page 47. Your brain maintains a rough map of your own claims, definitions, and commitments. When AI writes for you — or with you — that map doesn't exist. You didn't write page 12. You prompted it. Three weeks and forty conversations ago.

Now multiply this by reality. You don't have one document. You have a pitch deck, a technical specification, a regulatory filing, a patent application, a compliance manual, an API reference, and a partnership agreement. Each generated in different sessions, with different prompts, sometimes with different AI models.

Is the term "user data" defined the same way in your privacy policy and your API docs? Does your patent claim contradict your published whitepaper? Does your compliance manual reference a process that your technical spec actually forbids?

You don't know. Nobody knows. There is no tool that checks this.

Why Your Current Tools Can't Help

"But I use RAG / AI search / embeddings."

RAG answers the question: "What does my corpus say about X?" It finds relevant fragments. That's powerful for retrieval. But retrieval is not verification.

RAG cannot answer: "Is my corpus internally consistent?" — because that's not a query. There's no prompt you can write that says "find every contradiction I don't know about." Contradictions are structural. They live in the relationships between documents, not inside any single one.

"But I can ask ChatGPT to check."

No, you can't. Your corpus is 300 pages. ChatGPT's context window is — let's be generous — 128K tokens. That's roughly 100 pages of dense text. Your corpus doesn't fit. And even if it did: loading a document into a context window is reading, not verification. The model will catch obvious contradictions in adjacent paragraphs. It will miss the one where your definition on page 14 quietly conflicts with an assumption on page 203.

"I'll use a sliding window / map-reduce approach."

Sliding windows maintain local context within each window. They cannot maintain cross-document dependency chains. Map-reduce assumes your combination operation is associative and commutative. Structural consistency verification is neither: dependency resolution order matters, and two individually consistent sub-corpora can be mutually inconsistent at their boundary.

"What about knowledge graphs?"

Traditional knowledge graph extraction requires a pre-defined ontology. It identifies entities by string matching or embedding similarity — probabilistic and non-deterministic. It doesn't tell you "these two documents define the same term differently." It doesn't trace a claim-dependency chain across five documents to find the one broken link.

Every existing approach treats documents as bags of content to be searched, summarized, or indexed. None of them treats a document corpus as a structural system that can be verified for internal consistency — the way a compiler checks code.

The Problem No One Named

Here's what's actually happening. Every time you generate a document, you're making structural commitments: you define terms, you make claims, those claims depend on other claims, those claims assume certain conditions, and they operate within certain boundaries.

When you generate the next document, you make more commitments. Some of them reference the first document's commitments. Some of them silently contradict them. Some of them use the same words to mean different things.

This is called terminological drift, and it's the silent killer of large document corpora. Not because anyone intended it, but because no tool tracks it.

In software engineering, we solved this decades ago. You don't ship code without a compiler checking type consistency, a linker resolving dependencies, and a test suite verifying contracts. The equivalent for documents doesn't exist.

Until now, it didn't need to. Documents were written slowly enough that human memory served as the linker. That era is over.

What a Solution Would Look Like

Imagine uploading 10, 50, or 200 documents to a system that returns:

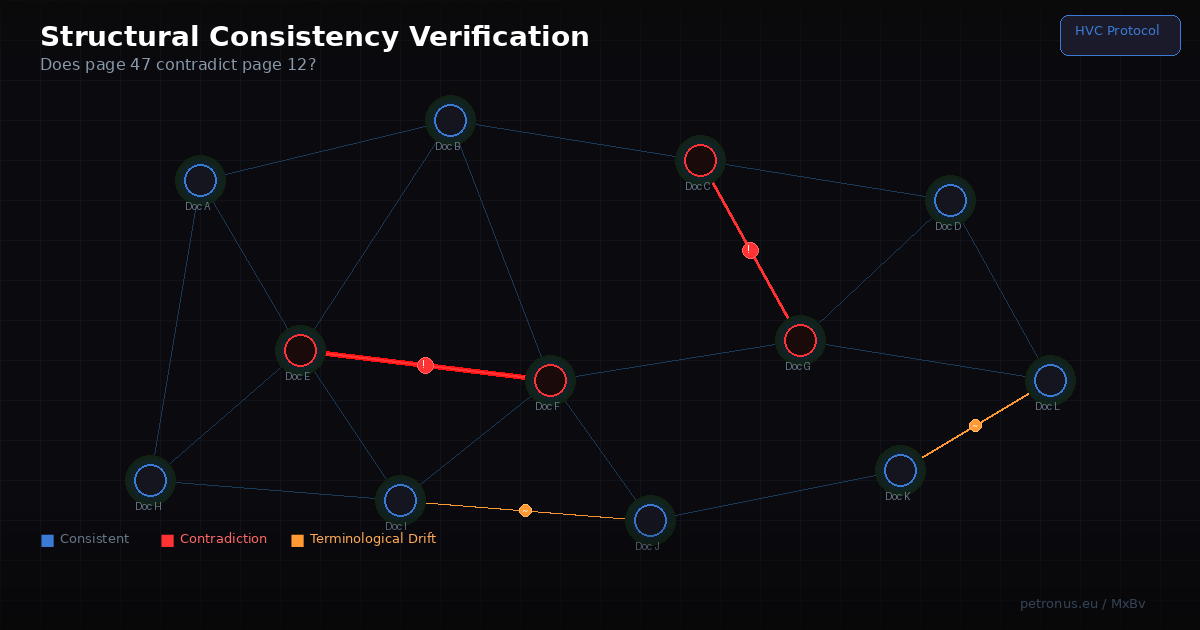

"Here are your 7 term conflicts" — places where the same concept is defined differently across documents, with exact source locations.

"Here are your 3 claim contradictions" — places where Document A asserts something that Document B denies, with the chain of reasoning that connects them.

"Here are your 12 broken dependency chains" — places where a claim in one document depends on a definition or assumption in another document that was never actually stated, or was stated differently.

"Here is your regime conflict" — places where two documents operate under incompatible assumptions about scope, jurisdiction, or applicability.

Each flagged issue comes with a minimum proof: just the conflicting elements, their immediate context, and the shortest path connecting them. Not a 50-page report. A focused, bounded, verifiable unit — typically under 2,000 words — that you can review and resolve in minutes.

The system doesn't read your documents the way a human would. It compiles them. It extracts structure, builds a cross-reference graph, and runs typed verification queries against that graph. The verification cost scales linearly with the number of structural relationships — not quadratically with the number of documents.

A corpus of ten million tokens can be structurally verified by models with context windows as small as 4,000 tokens. The architecture doesn't fight the context window limit. It makes it irrelevant.

Who Needs This Right Now

Patent attorneys managing portfolios of 50+ related patents where one contradictory claim can invalidate an entire family.

Regulatory compliance teams preparing filing suites where a single inconsistency between documents triggers rejection and months of rework.

Startup founders who generated their entire documentation stack with AI and need to know it's internally consistent before sending it to investors, regulators, or partners.

Engineering teams maintaining specification suites where a changed requirement in one document cascades through a dozen others.

Academic researchers publishing paper series where terminological drift between publications undermines the theoretical framework.

Consultants and agencies delivering multi-document client deliverables where internal contradictions destroy credibility.

Legal teams reviewing contract suites where an inconsistent definition across agreements creates exploitable loopholes.

Anyone who writes more than they can remember.

Which, in 2026, is everyone.

What Exists Today

A provisional patent (filed February 2026) covers the core architecture: hierarchical structural surrogate construction, deterministic term identification via cryptographic hashing, batched graph-query verification, and bounded proof slice extraction. The research behind it spans two years of formal work in adaptive systems theory.

An MVP is in development. The first test corpus: 42 published research papers totaling over one million tokens. Real terminological drift. Real dependency chains. Real structural verification.

What Comes Next

If this problem resonates — if you've ever sent a document package and later realized something in Document C contradicted something in Document A — then you know this isn't a nice-to-have. It's infrastructure that should have existed the moment AI started writing for us.

Follow the development at petronus.eu. The first public demo is coming.

And in the meantime: go check page 47 against page 12. You might not like what you find.

Maksim Barziankou (MxBv) is the architect of Navigational Cybernetics 2.5 and the inventor of the Hierarchical Verification Compression protocol. He builds structural tools for long-horizon adaptive systems at petronus.eu.